Filebeats elastic search

4/5/2023

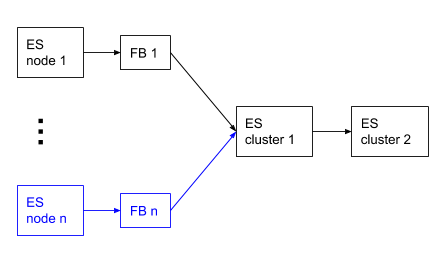

I don't see how the harvester limit should affect overall ingestion rates. The harvester limit only controls how many files you process in parallel.

When trying to tune ingestion, try to identify the bottleneck first. There are a few components that might create back-pressure. I'd start by testing how fast Filebeat can actually process files on localhost. Have a separate Filebeat test config, with a separate registry file.

Using pv, we can check Filebeat throughput when using the console output: `$ filebeat -c test-config.yml | pv -Warl >/dev/null`. In test-config.yml we configure the console output (`output.console:`). The pv tool will print the throughput, in number of lines (= events) per second, to stderr.

Simple Logstash config for testing: input …

In Filebeat there is a memory queue (see: queue docs), which collects the events from all harvesters. The queue is used to combine events into batches. The output draws a batch of bulk_max_size events from the queue. That is, in Filebeat with filled-up queues (which is quite normal due to back-pressure), you will have B = (number of events the queue can hold) / bulk_max_size batches. The Logstash output by default operates asynchronously, with pipelining: 2. That is, one worker can have up to 2 live batches. The queue will block and accept new events only after Logstash has ACKed a batch. The total number of output workers in Filebeat is given by (assuming loadbalance: true): W = (workers configured per host) * len(hosts).
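To make the batch and worker arithmetic above concrete, here is a minimal Python sketch. The function names, host names, and sample numbers are illustrative assumptions, not values from the post. The queue capacity is assumed to correspond to Filebeat's `queue.mem.events` setting, and the per-host worker count to the `worker` setting of the Logstash output.

```python
from typing import Sequence


def batches_in_queue(queue_events: int, bulk_max_size: int) -> int:
    """B: how many full batches a filled-up queue can hold."""
    if bulk_max_size <= 0:
        raise ValueError("bulk_max_size must be positive")
    return queue_events // bulk_max_size


def output_workers(workers_per_host: int, hosts: Sequence[str]) -> int:
    """W: total output workers, assuming loadbalance: true."""
    return workers_per_host * len(hosts)


def max_live_batches(workers: int, pipelining: int = 2) -> int:
    """Upper bound on batches awaiting an ACK across all workers."""
    return workers * pipelining


# Illustrative values only (assumptions, not recommendations).
queue_events = 4096          # assumed queue capacity in events
bulk_max_size = 2048         # assumed batch size
hosts = ["logstash-a:5044", "logstash-b:5044"]  # hypothetical hosts
workers_per_host = 1

b = batches_in_queue(queue_events, bulk_max_size)
w = output_workers(workers_per_host, hosts)
live = max_live_batches(w)

print(f"B = {b} batches in a full queue")
print(f"W = {w} output workers")
print(f"up to {live} batches could be in flight")
if live > b:
    # Rough reading: the queue cannot supply enough batches to keep
    # every worker's pipeline full, so the queue size may be the limit.
    print("queue holds fewer batches than the workers could pipeline")
```

If the last message appears, one plausible reading is that the queue, rather than the number of workers, limits how many batches can be sent concurrently. That is worth checking before adding more workers.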
