Filebeats s3

4/5/2023

Set logging.level to debug in config file for verbose output.

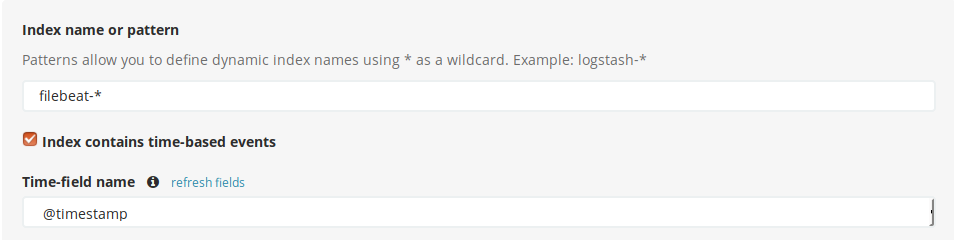

Set inputs, of the log variety, to read Nginx log files. The configuration excludes ELB health checks from the logs, adds a custom field called `index_name`, and sends the logs to their respective pipeline.

Configure an Elasticsearch output to send log records to the Elasticsearch cluster directly. The index name starts with the `index_name` field defined in each input; if that field is missing, it falls back to "logs". The Filebeat version comes next, so that versions that might conflict with each other send documents to different indexes.

Because we use a custom index name, we need to either define a custom template for it or tell Filebeat not to set the template at all. The field mapping is defined in the pipeline, so we disable index templates. This will create an index with default settings, like five shards and one replica.

Sending documents to Elasticsearch that the pipeline can't process will result in:

`ERR Failed to publish events: temporary bulk send failure`

A note here: BSD's newsyslog (the log rotation system) might append a message at the end of a log it rotates, saying that it was turned over and why. This is very likely to cause the pipeline to return an error, resulting in the above message in the Filebeat logs, and it will stop further processing. The solution is to either add the "B" flag to the newsyslog config or add that line to `exclude_lines` in the Filebeat config.

To start Filebeat with output to stdout, pass it the `-e` option. On FreeBSD it would be `filebeat …nfig /usr/local/etc … /var/db/beats/filebeat -e`.
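The index naming and line-exclusion rules described above can be mimicked in a few lines of Python to check them before touching the real config. This is a sketch under assumptions, not Filebeat itself: the function names are hypothetical, and the two regexes are guesses at what an ELB health-check line (User-Agent `ELB-HealthChecker/...`) and a newsyslog rotation notice look like. Like Filebeat's `exclude_lines`, a pattern drops a line if it matches anywhere in it.

```python
import re

# Fallback prefix when an input does not set index_name, as described above.
DEFAULT_INDEX_PREFIX = "logs"

# Assumed exclude_lines-style regexes (illustrative guesses at the line formats).
EXCLUDE_PATTERNS = [
    re.compile(r"ELB-HealthChecker"),                      # ELB health checks
    re.compile(r"newsyslog\[\d+\]: logfile turned over"),  # rotation notice
]


def index_name(fields: dict, beat_version: str) -> str:
    """Hypothetical helper: '<index_name>-<version>', falling back to 'logs'."""
    prefix = fields.get("index_name") or DEFAULT_INDEX_PREFIX
    return f"{prefix}-{beat_version}"


def should_exclude(line: str) -> bool:
    """True if any pattern matches somewhere in the line (search, not full match)."""
    return any(p.search(line) for p in EXCLUDE_PATTERNS)


if __name__ == "__main__":
    lines = [
        '10.0.0.1 - - [05/Apr/2023:10:00:00 +0000] "GET / HTTP/1.1" 200 612 "-" "ELB-HealthChecker/2.0"',
        "Apr  5 00:00:00 host newsyslog[123]: logfile turned over due to size>100K",
        '203.0.113.7 - - [05/Apr/2023:10:00:01 +0000] "GET /about HTTP/1.1" 200 1024 "-" "curl/8.0"',
    ]
    for line in lines:
        print("drop" if should_exclude(line) else "keep", line)
    print(index_name({"index_name": "nginx"}, "6.2.4"))  # version is a placeholder
    print(index_name({}, "6.2.4"))
```

Only the last sample line survives the filter, which is the one line the Combined Log Format pipeline can actually parse.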

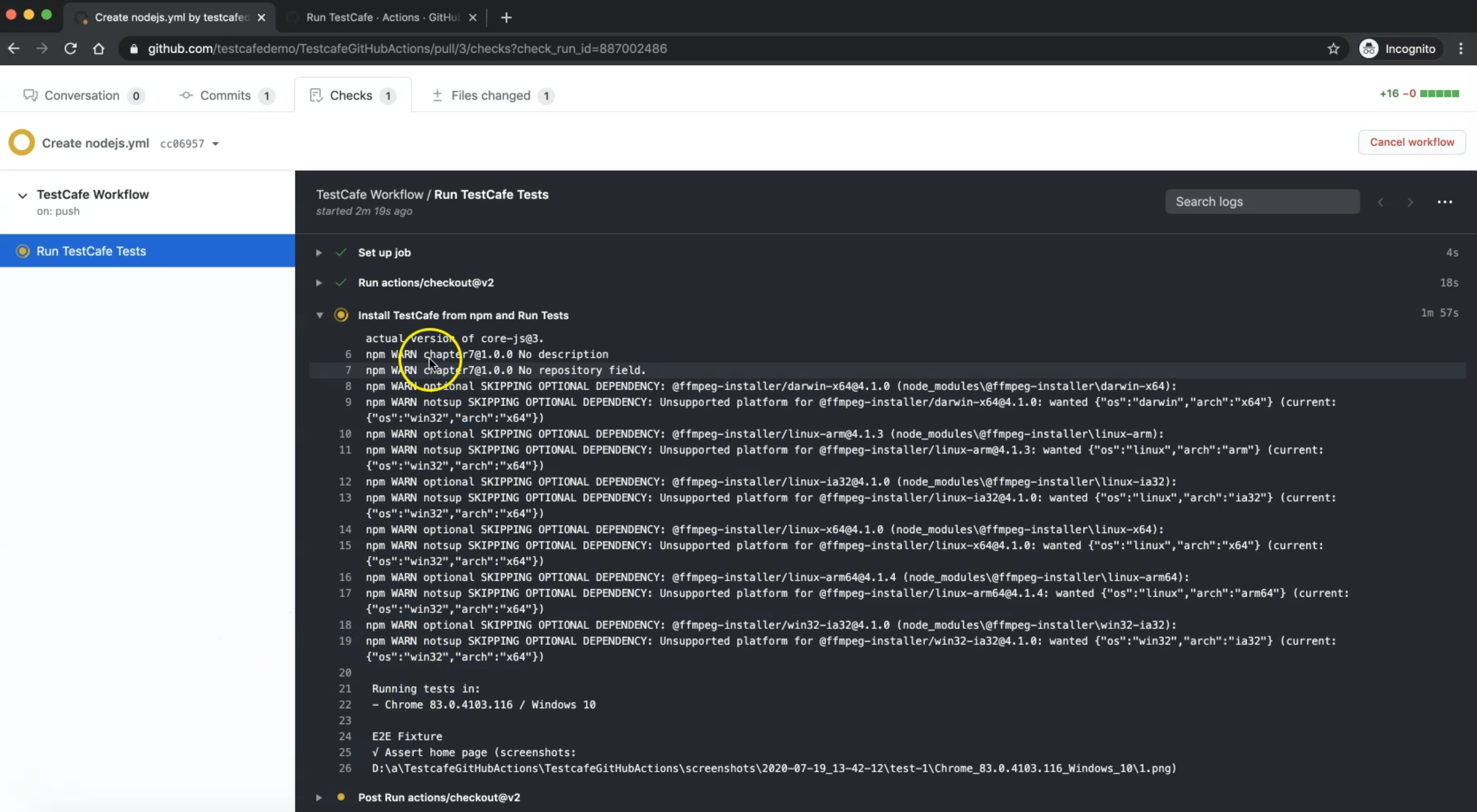

Interacting with Elasticsearch is done through API calls. One convenient way to do that is to use Kibana's Console, under "Dev Tools" in the left side menu. API calls below are presented in Console format. In order to get to Kibana on Amazon Elasticsearch, go to …

Create a pipeline for ingesting Nginx logs, with `"description": "Ingest pipeline for Combined Log Format"`.

A Filebeat configuration should have at least an input and an output section. Filebeat starts a harvester for each file configured in the inputs section. In the config's Logging section, `#logging.level: debug` is left commented out.

Start Filebeat and confirm that it all works as expected.
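The pipeline request itself can be generated rather than typed. The sketch below builds a Console-format `PUT _ingest/pipeline/<id>` call with a single grok processor using Elasticsearch's built-in `COMBINEDAPACHELOG` pattern, since nginx's default "combined" format has the same layout as Apache's. The pipeline id `nginx-access` is hypothetical, and the author's actual pipeline body is not shown in the source; this is one minimal possibility, not a reconstruction of it.

```python
import json

# Hypothetical pipeline id; the source does not name its pipeline.
PIPELINE_ID = "nginx-access"


def pipeline_body() -> dict:
    """Minimal ingest pipeline: grok the raw line in 'message' with the
    built-in COMBINEDAPACHELOG pattern."""
    return {
        "description": "Ingest pipeline for Combined Log Format",
        "processors": [
            {"grok": {"field": "message", "patterns": ["%{COMBINEDAPACHELOG}"]}}
        ],
    }


def console_request(pipeline_id: str = PIPELINE_ID) -> str:
    """Render the call in Kibana Console format: method and path, then JSON body."""
    return f"PUT _ingest/pipeline/{pipeline_id}\n" + json.dumps(pipeline_body(), indent=2)


if __name__ == "__main__":
    # Paste the printed text into Dev Tools > Console.
    print(console_request())
```

Extracted field names depend on the Elasticsearch version (newer releases emit ECS-style names for this pattern), so check the resulting documents before relying on specific fields.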

Filebeat is part of the Beats family of data shippers. Logs can also be loaded in other ways, for example by a Lambda function that gets triggered when a log is uploaded to S3 or CloudWatch, or by using Firehose to load logs into Elasticsearch.

Practical example: nginx log ingestion using Filebeat and pipelines

Here we use Filebeat and an ingest pipeline to get logs into Elasticsearch. First, define a pipeline on the Elasticsearch cluster. The pipeline will translate a log line to JSON, informing Elasticsearch about what each field represents. For example, the first field is the client IP address. Then install and configure Filebeat to read nginx access logs and send them to Elasticsearch using that pipeline.
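To make "translate a log line to JSON" concrete, here is a local Python equivalent of what the pipeline does to one Combined Log Format line. It is an illustration only: the regex and the field names (`client_ip`, `status`, and so on) are chosen for this sketch and are not what Elasticsearch's grok pattern produces.

```python
import json
import re
from typing import Optional

# Combined Log Format, as written by nginx's default "combined" log_format.
COMBINED_RE = re.compile(
    r'^(?P<client_ip>\S+) '      # client IP address (the first field)
    r'(?P<ident>\S+) '           # identd, usually "-"
    r'(?P<user>\S+) '            # authenticated user, or "-"
    r'\[(?P<time>[^\]]+)\] '     # e.g. [05/Apr/2023:10:00:01 +0000]
    r'"(?P<request>[^"]*)" '     # e.g. "GET /about HTTP/1.1"
    r'(?P<status>\d{3}) '        # HTTP status code
    r'(?P<bytes>\d+|-) '         # response body size, "-" if none
    r'"(?P<referrer>[^"]*)" '    # Referer header
    r'"(?P<agent>[^"]*)"'        # User-Agent header
)


def parse_combined(line: str) -> Optional[dict]:
    """Turn one log line into a JSON-ready dict, or None if it doesn't match."""
    m = COMBINED_RE.match(line)
    if m is None:
        return None
    doc = m.groupdict()
    doc["status"] = int(doc["status"])
    doc["bytes"] = 0 if doc["bytes"] == "-" else int(doc["bytes"])
    return doc


if __name__ == "__main__":
    sample = ('203.0.113.7 - - [05/Apr/2023:10:00:01 +0000] '
              '"GET /about HTTP/1.1" 200 1024 "-" "curl/8.0"')
    print(json.dumps(parse_combined(sample), indent=2))
```

A line in any other shape, such as a log rotation notice, returns `None` here, which is the local counterpart of the pipeline error discussed in the Filebeat configuration notes.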
